by Andrew Bengoku

“Our mind is a collection of electrochemical processes taking place in the brain enabled by molecular structures unique to each individual.” This statement is so general and “high level” that it’s hard to disagree with. But what exactly does it mean when trying to understand the nuts and bolts of brain function and the mind? In other words, if we want to bring this statement into the realm of scientific investigations, we should be able to be more specific. For example, one should at least be able to define the descriptive level(s) considered relevant for the investigations. In this blog post, we’ll discuss a hypothetical experiment shedding light onto the issue of descriptive levels. In discussing these topics, we’ll use examples from machine learning and artificial neural networks, emphasizing the great promise they hold as models of brain function and, more generally, as tools for revealing the mysteries of the mind.

In neuroscience research, there are many descriptive levels, perhaps too many! Research in this field spans a formidable range of spatial and temporal scales, from genetics and small molecules to the whole brain or body. To understand whether a specific level of analysis is more informative than others, it is convenient to examine the “extremes” of these levels.

At one extreme, that of the very small spatial scale, we end up talking about atoms as the fundamental descriptive level. According to this view, only by understanding how each and every atom in the brain behaves and interacts with the others can we make sense of brain computations and solve the mystery of the mind.

At another extreme, one could assume that many of the “small things” in our brain are quite irrelevant: channels, cell morphologies, axons, dendrites, peptides, ion gradients, etc. Those are just the ingredients nature could work with, but in the end, one can summarize all this stuff with simple numerical parameters that capture the “gist” of this biological mess. For example, one could state that the most critical piece of information is whether a cell is active or not, summarized with a long vector of zeros and ones, indicating whether a cell is active at any given time point.
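To make this "zeros and ones" description concrete, here is a minimal sketch of what such a record could look like. The sizes, the activation probability, and the name `raster` are all assumptions chosen for illustration; nothing here is real data.

```python
import random

random.seed(0)  # fixed seed so the toy example is repeatable

N_CELLS = 4     # hypothetical: a real brain has tens of billions of neurons
N_STEPS = 10    # hypothetical number of time bins
P_ACTIVE = 0.3  # assumed chance that a cell is active in a given bin

# raster[c][t] is 1 if cell c is active in time bin t, and 0 otherwise.
raster = [
    [1 if random.random() < P_ACTIVE else 0 for _ in range(N_STEPS)]
    for _ in range(N_CELLS)
]

# Print one row of zeros and ones per cell.
for cell_id, row in enumerate(raster):
    print(f"cell {cell_id}: {''.join(map(str, row))}")
```

Under this extreme view, a table like this one (scaled up enormously) would be the whole story.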

So, where does the “correct” or most interesting/informative descriptive level sit between these two extremes? In this post, we do not answer this question; rather, we’ll try to sell you a mug with the BioFixation logo. Kidding. We will try to rule out the latter extreme scenario, the one about just knowing the zeros and ones of cell activations. This is still progress, right?

The latter extreme scenario suggests that we “just” need to know the on-off state over time of a subset of cells to capture the mind of an individual. In biology, “on or off” means whether or not a neuron is emitting an action potential, and we already have the technology to capture this information in relatively small neuronal ensembles. So we would just need to scale it up: potentially good news!

However, here’s a hypothetical experiment casting some doubts on this scenario, suggesting we should probably consider a few more details.

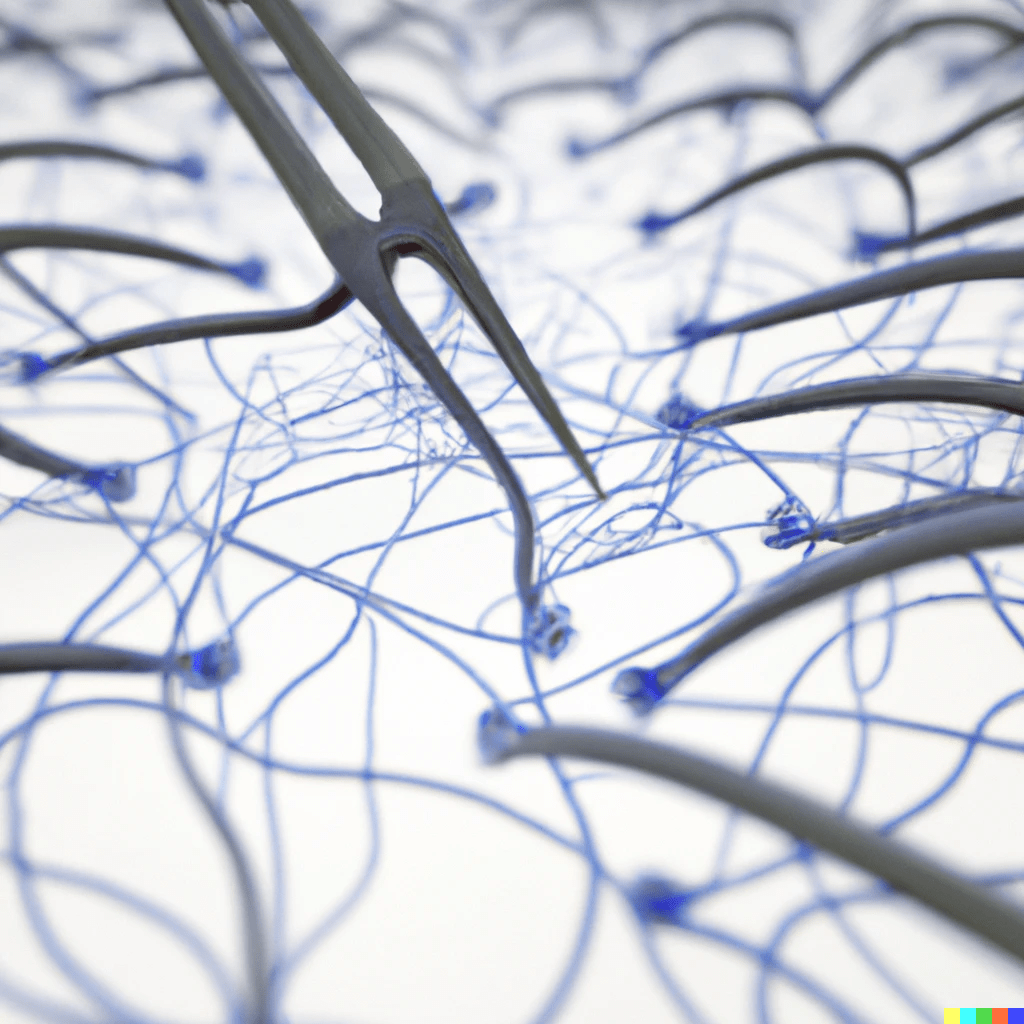

Suppose some formidable nanotechnology becomes available, allowing us to do three quite interesting things. One is to send nanobots to each and every cell and cut all connections between cells; a cell typically receives 10k-15k inputs, sometimes up to 50k, mostly from nearby cells. These nanobots perfectly seal the cuts and also encapsulate the cells within a nourishing cocoon to ensure they remain alive and healthy as if nothing happened (nanobots will have to cut off glia, blood vessels, etc., but this is a formidable technology!). The second piece of amazing technology is laser-based, allowing us to target individual cells with lasers to activate them or turn them off with nanosecond precision (similar to currently available optogenetic-based methods, but on steroids). The final ingredient is the possibility of recording the activity of all cells, also with nanosecond precision, something that is to some extent already possible in relatively small networks.

Now, with this technology in place, we can run the following experiment: we ask a person to look at a photo of a crowded city center, Times Square for example, and ask this person to look at it for 30 seconds trying to catch as many details as possible. At the same time, we record the activity of all neurons in the brain. We then send in the nanobots, cut all connections, and reactivate the neurons with laser beams for 30 seconds, repeating the exact same sequence of activations we previously recorded. The question is: during the 30 seconds of laser reactivation, would the person experience identical visual percepts, vividly “seeing” all details as when looking at the actual photo the first time? If the mind, and in this case, the visual experience and awareness of Times Square, is the activity of neurons over time, then the answer should be a resounding yes!

However, note that at this point the specific geometrical arrangement and position in space of the neurons play no role. We severed all connections and encapsulated neurons in cocoons so it doesn’t really matter where these cells are, as long as they are alive and healthy; what matters, according to the hypothesis we are testing, is the sequence we use to activate them. As a matter of fact, because of the nourishing cocoon, they don’t even need to be inside the skull. We could take the cells out of the skull, align them along a ring (or any other shape) in the experimental room, and excite them according to the recorded sequence. And because laser light travels quite fast, we could even make a ring shape in Earth orbit, circling the planet, with healthy and happy cells thanks to the cocoon, again exciting them according to the recorded sequence, with nanosecond resolution. Would then these excited cells orbiting the planet have a perceptual experience of Times Square?

It seems quite unlikely this orbital ring of cells would have any perceptual experience at all, but why?

At which stage of the hypothetical experiment do we lose the possibility of inducing a perceptual experience?

We can gain some insight by bringing into this discussion artificial neural networks (aNNs).

An explanation of the workings of aNNs is beyond the scope of this blog post. What suffices to know here is that these networks use principles quite similar to those of biological neuronal networks to perform formidable computations, often exceeding human abilities in very specialised tasks. Instead of neurons, they have “units” which can be activated, sending signals to other units. This communication is selective, with some units communicating more vigorously with some units and less with others. It is through this selective (or “weighted”) communication between units (and a few other details) that aNNs perform amazing computations. This is quite reminiscent of our super-simplified working hypothesis, where the mind is the product of the chattering of neurons in the brain (or a subset of them) — BTW, it’s no coincidence these nets are called artificial “neural” networks.
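For readers who like to see things in code, the "weighted communication" idea can be sketched in a few lines. This is a deliberately simplified toy, assuming a single unit with a sigmoid nonlinearity; the function name `unit_activation` and the weight values are hypothetical and not taken from any particular network.

```python
import math


def unit_activation(inputs, weights, bias=0.0):
    """Weighted sum of the incoming signals, squashed into (0, 1) by a sigmoid."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))


# Three upstream units are all equally active...
incoming = [1.0, 1.0, 1.0]
# ...but this unit "listens" to them very differently:
# a strong connection, a weak one, and an inhibitory one (assumed values).
weights = [2.0, 0.1, -1.5]

activation = unit_activation(incoming, weights)
print(f"activation of the receiving unit: {activation:.3f}")
```

The point of the sketch is that the same incoming activity produces a different response depending on the weights, which is where a network's "knowledge" lives.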

Let’s now take one of these networks, one that is very good at “seeing”. In recent years, progress in machine learning has generated networks surpassing human abilities in recognizing what’s shown in various images (semantic classification). We can then repeat our experiment using this aNN instead of the brain. We show the network the same image of Times Square and record the activations of the units. It won’t need 30 seconds to sort out all the details; it’ll do it almost instantaneously (it might take a bit longer if the network architecture includes recurrent and feedback connections, but these are irrelevant details for this discussion). Then, we send in the nanobots to cut all connections (no need for cocoons this time; we keep the units ‘alive’ in the code) and finally reactivate the units according to the recorded sequence. This network will be completely dead, computationally speaking, killed by the nanobots severing all connections. And here’s the key point: the only reason why this network could ‘see’ (produce activations leading to a meaningful classification output) was the selective communication between units, that is, the connectivity weights, which had been fine-tuned via a training procedure that exposed the network to thousands, possibly millions, of example images. The nanobots effectively undid the training by setting all connections to zero. We can certainly activate units in the network according to specific sequences, but the network itself is just a useless clump of unconnected elements; it has lost all its computational power.
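The artificial version of the experiment can be sketched with a toy network. Everything below is an assumption made for illustration: a four-pixel "image", two hidden units, one output unit, hand-picked weights instead of trained ones, and a simple threshold in place of a realistic activation function.

```python
def step(x):
    """Threshold unit: active (1) if its summed input is positive, else 0."""
    return 1 if x > 0 else 0


def forward(image, w_hidden, w_out):
    """Propagate an image through one hidden layer to a single output unit."""
    hidden = [step(sum(p * w for p, w in zip(image, row))) for row in w_hidden]
    output = step(sum(h * w for h, w in zip(hidden, w_out)))
    return hidden, output


# Hand-picked weights standing in for a trained network (hypothetical values).
w_hidden = [[1, 1, -1, -1],   # hidden unit 0 prefers a bright left half
            [-1, -1, 1, 1]]   # hidden unit 1 prefers a bright right half
w_out = [1, -1]               # output says 1 for "left", 0 for "right"

image_left = [1, 1, 0, 0]
image_right = [0, 0, 1, 1]

# 1) Intact network: record the activations while it "looks" at an image.
recorded_hidden, recorded_out = forward(image_left, w_hidden, w_out)
intact_right = forward(image_right, w_hidden, w_out)

# 2) The nanobots: every connection weight is set to zero.
cut_hidden = [[0] * len(row) for row in w_hidden]
cut_out = [0] * len(w_out)

# 3) The severed network responds identically to both images.
severed_left = forward(image_left, cut_hidden, cut_out)
severed_right = forward(image_right, cut_hidden, cut_out)

# 4) The "laser": we can still impose the recorded pattern on the units,
#    but it is copied from the recording, not computed from any image.
replayed_hidden, replayed_out = list(recorded_hidden), recorded_out

print("intact :", (recorded_hidden, recorded_out), intact_right)
print("severed:", severed_left, severed_right)
print("replay :", (replayed_hidden, replayed_out))
```

In this sketch the intact network tells the two images apart, the severed one cannot, and the replayed pattern matches the recording whatever image is presented.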

Going back to the brain, it should now be clear that isolated neurons in cocoons are nothing more than a bunch of lonely cells thrown into some ‘container’, the skull; they are kept alive, but they cannot function collectively as a network. Their ability to perceive or perform any brain computation is gone. Therefore, in our experiment, the ability to perceive was lost when we sent the nanobots in, severing all connections.

That’s not only when we abolished any visual perception; that’s when we killed the mind of the person!

Indeed, this hypothetical experiment can be extended beyond visual perception to any other sensory, motor, or cognitive phenomena. The bottom line remains: the sequence of neuronal activations is certainly important, but it is not enough. It is necessary, but not sufficient; the other necessary ingredient is having this sequence emerge from selective communication between neurons.

Are these two conditions, taken together, then enough (necessary and sufficient) for the mind to emerge? This is an open scientific question and, in our view, one that is quite amenable to being tackled using artificial neural networks designed to mimic (structurally and functionally) biological neural networks.

There are quite a few objections that could be raised in the context of this hypothetical experiment. A particularly interesting one concerns the question posed above. Let’s say that ‘seeing’ is not just the sequence of brain activity observed when looking at the photo of Times Square; let’s say that the selective communication between neurons enabling this collective activity to emerge, that is, the unsevered cell-to-cell connections, is also key. (Again, we are sticking to vision in these examples, but similar arguments can be made for motor, memory, emotional, or cognitive processes in general.) The underlying reasoning is that brain networks have been evolutionarily and developmentally fine-tuned to process (e.g., discriminate and classify) features in the visual world, not too differently from an artificial neural network trained in similar visual tasks. It is the activity of these fine-tuned networks, emerging from the selective communication between cells, that constitutes (‘is’ rather than ‘relates to’) perceptual awareness. If we embrace this view, would it then be legitimate to argue that artificial networks trained in visual tasks also have some form of visual awareness? Perhaps not like human awareness, since many details are quite different, but some awareness nonetheless?

This is a difficult question. It could be, for example, that the ‘substrate’ matters, with all those tiny carbon-based biological details (peptides, ions, channels, etc.) being critical and crucially missing in silico. Or that this selective cell communication is ‘read-out’ by other brain networks, and they are the substrate of conscious experience. Or, if we adopt a ‘simplistic’ working hypothesis until forced by evidence to argue otherwise, then yes, these aNNs have some sort of visual awareness…

Interestingly, some neuroscientists have been boldly going down this road, with even prominent computer scientists similarly discussing linguistic awareness in LLMs. The main hurdle is how to test for this. In humans, we simply ask. How do we test aNNs other than checking their classification output, i.e., their answers? But wait, isn’t checking answers precisely what we do with humans as well? Hmm, I’m afraid we are starting to go down a quite dark rabbit hole…

A good point to end this blog post, leaving you with an entertaining exchange with the expert, ChatGPT:

BF: Are you a large language model?

LLM: Yes, I am a large language model created by OpenAI called GPT-3.5. How can I assist you today?

BF: Are you aware of the answer you just gave me?

LLM: Yes, I am aware of the responses I generate. I use patterns learned during training to provide contextually relevant replies based on the input I receive. How can I help you further?
